Building a multi-master k8s cluster with kubeadm

Disable swap (run on every node)

1. Disable it temporarily

swapoff -a

2. Disable it permanently

sed -i 's/\/dev\/mapper\/centos-swap/#\/dev\/mapper\/centos-swap/g' /etc/fstab
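The sed command above only matches the default CentOS LVM device name. For hosts whose swap device is named differently, a rough sketch of a more general variant follows. It comments out every active fstab entry whose filesystem type is swap. The helper name and the file argument are illustrative assumptions, not part of the original procedure.

```shell
# comment_out_swap FILE: prefix every uncommented fstab line whose third
# field (filesystem type) is "swap" with '#'. The result goes to stdout,
# so the real file is only changed if you redirect and copy it yourself.
comment_out_swap() {
    awk '$1 !~ /^#/ && $3 == "swap" { print "#" $0; next } { print }' "$1"
}

# Example (review the output before replacing the real file):
#   comment_out_swap /etc/fstab > /tmp/fstab.new
```

Printing to stdout instead of editing in place keeps the sketch safe to try on a copy first.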

Or

edit /etc/fstab with vim and comment out the swap mount:

#/dev/mapper/centos-swap swap swap defaults 0 0

Disable the firewall and SELinux (run on every node)

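1. Stop and disable the firewall

systemctl stop firewalld && systemctl disable firewalld

2. Disable SELinux

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config   # permanent, applies after the next reboot

setenforce 0   # temporary, until the next reboot

Upgrade the kernel (run on every node)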

1. Download

wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-5.4.142-1.el7.elrepo.x86_64.rpm

wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-devel-5.4.142-1.el7.elrepo.x86_64.rpm

2. Install

yum install kernel-lt-5.4.142-1.el7.elrepo.x86_64.rpm kernel-lt-devel-5.4.142-1.el7.elrepo.x86_64.rpm -y

3. Set the default boot kernel

grub2-set-default "CentOS Linux (5.4.142-1.el7.elrepo.x86_64) 7 (Core)"

4. Check that the change took effect

grub2-editenv list

5. Reboot

reboot

6. Remove the old kernel

yum remove kernel -y

Set the hostname (run on every node)

hostnamectl set-hostname k8smaster1   # on the first node

hostnamectl set-hostname k8smaster2   # on the second node

hostnamectl set-hostname k8smaster3   # on the third node

Update /etc/hosts (run on every node; adjust the IPs to your environment)

cat >>/etc/hosts <<EOF
192.168.36.128 k8smaster1
192.168.36.129 k8smaster2
192.168.36.130 k8smaster3
192.168.36.131 k8s-vip
EOF

Install dependencies (run on every node)

yum install conntrack ntpdate ipvsadm ipset sysstat libseccomp wget net-tools -y

Tune kernel parameters (run on every node)

Run: modprobe br_netfilter

cat > /etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-ip6tables =1

net.bridge.bridge-nf-call-iptables =1

net.ipv4.ip_forward=1

#net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

EOF

sysctl -p /etc/sysctl.d/kubernetes.conf

Raise the limits on processes and open files (run on every node)

cat <<EOF >>/etc/sysctl.conf

net.core.somaxconn = 65535

fs.file-max = 20480000

fs.nr_open = 20480000

EOF

sysctl -p
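fs.file-max and fs.nr_open are set in both /etc/sysctl.d/kubernetes.conf and /etc/sysctl.conf with different values, so whichever file is loaded last decides the running value. A rough sketch for spotting such duplicates is shown below. The helper name is made up for illustration, and it assumes the files are passed in the order they are loaded.

```shell
# duplicate_sysctl_keys FILE...: print every key that is assigned more than
# once across the given files, followed by the last value seen for it.
# Whitespace around keys and values is stripped before comparing.
duplicate_sysctl_keys() {
    awk -F= '
        /^[ \t]*$/    { next }    # skip blank lines
        /^[ \t]*[#;]/ { next }    # skip comments
        {
            key = $1; val = $2
            gsub(/[ \t]/, "", key); gsub(/[ \t]/, "", val)
            count[key]++; last[key] = val
        }
        END { for (k in count) if (count[k] > 1) print k, last[k] }
    ' "$@" | sort
}

# Example:
#   duplicate_sysctl_keys /etc/sysctl.d/kubernetes.conf /etc/sysctl.conf
```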

cat <<EOF >>/etc/security/limits.conf

* soft nofile 5000000

* hard nofile 5000000

* soft nproc 65535

* hard nproc 65535

EOF

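2. Edit /etc/security/limits.d/20-nproc.conf

Change

* soft nproc 4096

to

* soft nproc 65535

sed -i s/4096/65535/g /etc/security/limits.d/20-nproc.conf

3. Log out of the SSH session and reconnect so that the new limits apply to the new session.

Synchronize the system time (run on every node)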

ntpdate ntp.aliyun.com

echo '*/3 * * * * /usr/bin/ntpdate ntp.aliyun.com' >> /var/spool/cron/root

Enable IPVS for kube-proxy (run on every node)

Run: modprobe br_netfilter

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack
EOF

chmod +x /etc/sysconfig/modules/ipvs.modules

sh /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack

Install Docker or containerd (all nodes; install only one of the two)

Install Docker

1. Install the dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

2. Add the yum repository

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3. List the versions that can be installed

yum list docker-ce.x86_64 --showduplicates | sort -r

4. Install a specific version of Docker

yum install -y docker-ce-19.03.9-3.el7

5. Or install the latest version of Docker

yum install -y docker-ce

6. Change the default storage path

Edit /usr/lib/systemd/system/docker.service and change

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

to

ExecStart=/usr/bin/dockerd -H fd:// --data-root=/data/docker --containerd=/run/containerd/containerd.sock

Note: /data is the new storage location. The older --graph flag has the same effect on Docker 19.03, but it is deprecated and has been removed from newer releases.

7. Edit the daemon configuration file

mkdir -p /etc/docker

Edit /etc/docker/daemon.json and add the following:

{
  "registry-mirrors": ["https://jabq36oa.mirror.aliyuncs.com"],
  "log-driver": "json-file",
  "log-opts": {"max-size": "200m", "max-file": "10"},
  "exec-opts": ["native.cgroupdriver=systemd"]
}

8. Create the data directory

mkdir -p /data

9. Start Docker

systemctl daemon-reload && systemctl start docker && systemctl enable docker && systemctl status docker

Install containerd

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum list | grep containerd

yum install containerd.io.x86_64 -y

mkdir -p /etc/containerd

containerd config default > /etc/containerd/config.toml

sed -i "s#k8s.gcr.io#registry.cn-hangzhou.aliyuncs.com/google_containers#g" /etc/containerd/config.toml

sed -i 's#SystemdCgroup = false#SystemdCgroup = true#g' /etc/containerd/config.toml

cat <<EOF> /etc/crictl.yaml

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

Edit /etc/containerd/config.toml to add a registry mirror. Add the following under [plugins."io.containerd.grpc.v1.cri".registry.mirrors], keeping the two-space indentation:

  [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
    endpoint = ["https://registry.cn-hangzhou.aliyuncs.com"]

systemctl daemon-reload && systemctl enable containerd && systemctl restart containerd && systemctl status containerd

Install docker-compose

curl -L https://get.daocloud.io/docker/compose/releases/download/v2.0.1/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

Deploy HAProxy with Docker (run on all masters)

docker pull haproxy:2.4.7-alpine

docker-compose file

version: "3.8"
services:
  haproxy:
    image: haproxy:2.4.7-alpine
    container_name: haproxy2
    restart: always
    ports:
      - 16443:16443   # port bound by the frontend in haproxy.cfg
      - 13365:13365
      - 1080:1080
    volumes:
      - /etc/localtime:/etc/localtime
      - /root/haproxy.cfg:/usr/local/etc/haproxy/haproxy.cfg
    networks:
      haproxy:
        ipv4_address: 172.18.0.2
networks:
  haproxy:
    ipam:
      config:
        - subnet: 172.18.0.0/16

HAProxy configuration file

global
    log 127.0.0.1 local2
    maxconn 60000
    daemon

defaults
    mode http
    log global
    option httplog
    option dontlognull
    option http-server-close
    option redispatch
    retries 3
    timeout http-request 10s
    timeout queue 1m
    timeout connect 10s
    timeout client 1m
    timeout server 1m
    timeout http-keep-alive 10s
    timeout check 10s

frontend mysql
    bind *:16443
    mode tcp
    option tcplog
    default_backend k8s_server

backend k8s_server
    mode tcp
    balance roundrobin
    server server1 192.168.100.150:13365 weight 1 check inter 1s rise 2 fall 2
    server server2 192.168.100.64:13365 weight 1 check inter 1s rise 2 fall 2
    server server3 192.168.100.107:13365 weight 1 check inter 1s rise 2 fall 2

listen stats
    stats enable
    bind *:1080
    stats uri /admin
    stats auth admin:admin
    stats hide-version
    stats admin if TRUE
    stats refresh 10s

Deploy keepalived with Docker (run on all masters)

1. Pull the image

docker pull osixia/keepalived:latest

2. Tag it

docker tag osixia/keepalived:latest osixia/keepalived:2.0.20

3. Remove the original tag

docker rmi -f osixia/keepalived:latest

docker-compose file for the master

version: "3.8"
services:
  keepalived:
    image: osixia/keepalived:2.0.20
    container_name: keepalived2
    cap_add:
      - NET_ADMIN
      - NET_BROADCAST
      - NET_RAW
    volumes:
      - /etc/localtime:/etc/localtime
      - /root/keepalived/haproxy.sh:/container/service/keepalived/haproxy.sh
      - /root/keepalived/keepalived.conf:/usr/local/etc/keepalived/keepalived.conf
    network_mode: host
    restart: always

keepalived configuration file for the master

global_defs {
    script_user root
    enable_script_security
}

vrrp_script chk_haproxy {
    script "/container/service/keepalived/haproxy.sh"
    interval 2
    weight 2
}

vrrp_instance VI_1 {
    interface ens33
    state BACKUP
    virtual_router_id 51
    priority 100
    advert_int 1
    nopreempt
    unicast_src_ip 192.168.133.20    # internal IP of the master
    unicast_peer {
        192.168.133.21               # internal IPs of the backups, one per line
        192.168.133.22
    }
    virtual_ipaddress {
        192.168.133.29
    }
    authentication {
        auth_type PASS
        auth_pass 123456
    }
    track_script {
        chk_haproxy
    }
    notify "/container/service/keepalived/assets/notify.sh"
}

docker-compose file for backup1

version: "3.8"
services:
  keepalived:
    image: osixia/keepalived:2.0.20
    container_name: keepalived2
    cap_add:
      - NET_ADMIN
      - NET_BROADCAST
      - NET_RAW
    volumes:
      - /etc/localtime:/etc/localtime
      - /root/keepalived/keepalived.conf:/usr/local/etc/keepalived/keepalived.conf
      - /root/keepalived/haproxy.sh:/container/service/keepalived/haproxy.sh
    network_mode: host
    restart: always

keepalived configuration file for backup1

global_defs {
    script_user root
    enable_script_security
}

vrrp_script chk_haproxy {
    script "/container/service/keepalived/haproxy.sh"
    interval 2
    weight 2
}

vrrp_instance VI_1 {
    interface ens33
    state BACKUP
    virtual_router_id 51
    priority 90
    advert_int 1
    nopreempt
    unicast_src_ip 192.168.133.21    # internal IP of this backup
    unicast_peer {
        192.168.133.20               # internal IP of the master
        192.168.133.22
    }
    virtual_ipaddress {
        192.168.133.29
    }
    authentication {
        auth_type PASS
        auth_pass 123456
    }
    track_script {
        chk_haproxy
    }
    notify "/container/service/keepalived/assets/notify.sh"
}

docker-compose file for backup2

version: "3.8"
services:
  keepalived:
    image: osixia/keepalived:2.0.20
    container_name: keepalived2
    cap_add:
      - NET_ADMIN
      - NET_BROADCAST
      - NET_RAW
    volumes:
      - /etc/localtime:/etc/localtime
      - /root/keepalived/keepalived.conf:/usr/local/etc/keepalived/keepalived.conf
      - /root/keepalived/haproxy.sh:/container/service/keepalived/haproxy.sh
    network_mode: host
    restart: always

keepalived configuration file for backup2

global_defs {
    script_user root
    enable_script_security
}

vrrp_script chk_haproxy {
    script "/container/service/keepalived/haproxy.sh"
    interval 2
    weight 2
}

vrrp_instance VI_1 {
    interface ens33
    state BACKUP
    virtual_router_id 51
    priority 90
    advert_int 1
    nopreempt
    unicast_src_ip 192.168.133.22    # internal IP of this backup
    unicast_peer {
        192.168.133.20               # internal IP of the master
        192.168.133.21
    }
    virtual_ipaddress {
        192.168.133.29
    }
    authentication {
        auth_type PASS
        auth_pass 123456
    }
    track_script {
        chk_haproxy
    }
    notify "/container/service/keepalived/assets/notify.sh"
}

haproxy.sh, the script keepalived uses to check HAProxy (create it on every node that runs keepalived)

#!/bin/bash

PORTS=$(netstat -lntp|grep 13365)

if [ -z "$PORTS" ];then

pkill -9 keepalived

fi

If the VIP is not released on failure, use the following script instead; 192.168.133.29 is the VIP.

#!/bin/bash

PORTS=$(netstat -lntp|grep 16443)

if [ -z "$PORTS" ];then

pkill -9 keepalived

ip addr del 192.168.133.29 dev ens33

fi
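Both scripts grep for the port number anywhere in the netstat output, so a PID or a longer port such as 116443 would also match. A stricter check compares the port at the end of the local-address column. The sketch below assumes the usual `netstat -lnt` layout, with the local address in the fourth column. It reads the listing from standard input so it can be tried without a live system. The function name is illustrative.

```shell
# port_is_listening PORT: read `netstat -lnt`-style output on stdin and
# succeed only if some local address (4th column) ends in ":PORT".
port_is_listening() {
    awk -v suffix=":$1" '
        { n = length($4) - length(suffix) + 1 }
        n > 0 && substr($4, n) == suffix { found = 1 }
        END { exit found ? 0 : 1 }
    '
}

# Example inside haproxy.sh:
#   netstat -lnt | port_is_listening 16443 || pkill -9 keepalived
```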

Make the script executable

chmod +x haproxy.sh
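Every node has to list the same address in virtual_ipaddress, otherwise a failover brings up the wrong IP. As a quick sanity check before starting the containers, the hypothetical helper below prints the addresses found in that block, so the keepalived.conf files from the three nodes can be compared. It assumes one address per line, as in the configurations above.

```shell
# keepalived_vips FILE: print each address listed inside the
# virtual_ipaddress { ... } block of a keepalived configuration file.
keepalived_vips() {
    awk '
        /virtual_ipaddress[ \t]*[{]/ { inside = 1; next }
        inside && /[}]/              { inside = 0; next }
        inside && NF                 { print $1 }
    ' "$1"
}

# Example:
#   keepalived_vips /root/keepalived/keepalived.conf
```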

Start keepalived

docker-compose up -d

Install kubelet, kubeadm and kubectl (run on every node)

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Install a specific version

yum install kubelet-<version> kubeadm-<version> kubectl-<version> -y
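kubelet, kubeadm and kubectl are normally kept on the same version. As a small sketch, the package list can be built from a single version string. The helper name and the version value in the example are assumptions for illustration only.

```shell
# k8s_packages VERSION: print the three package names pinned to VERSION.
k8s_packages() {
    printf '%s\n' "kubelet-$1 kubeadm-$1 kubectl-$1"
}

# Example (the unquoted substitution is intentional so yum sees three names):
#   yum install -y $(k8s_packages 1.24.0)
```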

If you use containerd, also run the following

sed -i 's#KUBELET_EXTRA_ARGS=#KUBELET_EXTRA_ARGS="--cgroup-driver=systemd --container-runtime=remote --container-runtime-endpoint=unix:///var/run/containerd/containerd.sock"#g' /etc/sysconfig/kubelet

Or install the latest version

yum install kubelet kubeadm kubectl -y

systemctl daemon-reload && systemctl enable kubelet

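2. Create a working directory (run on the master that holds the VIP)

mkdir /opt/kubernetes

cd /opt/kubernetes

3. Generate the default kubeadm-config.yaml

kubeadm config print init-defaults > kubeadm-config.yaml

4. Edit kubeadm-config.yaml

Add the following kubelet settings:

maxPods: 248                      # pod limit per node
imageGCHighThresholdPercent: 80   # start image garbage collection once disk usage reaches 80%
imageGCLowThresholdPercent: 70    # used with the setting above: collection frees space down to 70%
enforceNodeAllocatable:
kubeReserved:
  memory: 1Gi                     # reserve 1 GiB of memory on the node
  cpu: 1000m                      # reserve 1 CPU core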

The complete kubeadm-config.yaml:

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.2.152
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: k8smaster1
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: "k8s-vip:16443"   # VIP and HAProxy frontend port, the address used by kubeadm join
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.24.0
networking:
  dnsDomain: cluster.local
  podSubnet: "10.244.0.0/16"
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
maxPods: 248
imageGCHighThresholdPercent: 80
imageGCLowThresholdPercent: 70
enforceNodeAllocatable:
kubeReserved:
  memory: 1Gi
  cpu: 1000m
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs

Note: on 1.22, IPVS is configured the same way

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs
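kubeadm handles each `---`-separated document according to its kind, so a missing document means the corresponding defaults are used. A minimal sketch for listing the kinds present in the file is shown below. The helper name is hypothetical, and it simply matches any line whose first field is `kind:`, which is enough for a file shaped like the one above.

```shell
# config_kinds FILE: print the kind of every YAML document in FILE, in order.
config_kinds() {
    awk '$1 == "kind:" { print $2 }' "$1"
}

# Example: warn if the kube-proxy document is missing.
#   config_kinds kubeadm-config.yaml | grep -qx KubeProxyConfiguration \
#       || echo "no KubeProxyConfiguration document found"
```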

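If you forgot these parameters at initialization, add them to the kubelet configuration by hand and then restart kubelet:

vim /var/lib/kubelet/config.yaml

Append the following at the end of the file (the same settings as above):

maxPods: 248                      # pod limit per node
imageGCHighThresholdPercent: 80   # start image garbage collection once disk usage reaches 80%
imageGCLowThresholdPercent: 70    # used with the setting above: collection frees space down to 70%
enforceNodeAllocatable:
kubeReserved:
  memory: 1Gi                     # reserve 1 GiB of memory on the node
  cpu: 1000m                      # reserve 1 CPU core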

systemctl daemon-reload && systemctl restart kubelet.service

1. List the images kubeadm needs to install k8s

kubeadm config images list --config kubeadm-config.yaml

2. Initialize the cluster (run on the master that holds the VIP)

kubeadm init --config=kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

3. Run on that master

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config
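The join commands printed by kubeadm init are also saved in kubeadm-init.log by the tee above. As a sketch, the first one can be pulled back out of the log later. This assumes kubeadm prints the control-plane join command before the worker one and ends each continued line with a backslash. The helper name is illustrative.

```shell
# first_join_command LOG: print the first "kubeadm join" command found in
# the saved init output, including its backslash-continued lines.
first_join_command() {
    awk '
        !started && /kubeadm join/ { started = 1 }
        started && !finished {
            print
            if ($0 !~ /\\$/) finished = 1   # a line without a trailing backslash ends the command
        }
    ' "$1"
}

# Example:
#   first_join_command /opt/kubernetes/kubeadm-init.log
```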

4. On the other master nodes, run the control-plane join command that was printed on the first master

kubeadm join k8s-vip:16443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:6a51d4139659fb71cf73cb21ab7184bf3a96f2c57b7f20a6708b0efdd6033db7 \
    --control-plane --certificate-key 1f3133c082d91e550e0b6e0c4ec6ddfb8f4a60c171fc7f41602b8c79b78ff479

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Update the HAProxy configuration again to add the other master nodes (run on every machine where HAProxy is installed)

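backend kubernetes-apiserver
    mode tcp
    balance roundrobin
    server k8smaster1 192.168.36.128:6443 check
    server k8smaster2 192.168.36.129:6443 check
    server k8smaster3 192.168.36.130:6443 check

The default_backend line in the frontend section must name this backend, so change it from k8s_server if you rename the backend as shown here.

Install the Calico network plugin (on Aliyun the manifest is named calico-etcd.yaml)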

cd /root/k8s-ha-install/calico/

sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://192.168.2.152:2379"#g' calico-etcd.yaml

ETCD_CA=`cat /etc/kubernetes/pki/etcd/ca.crt | base64 | tr -d '\n'`

ETCD_CERT=`cat /etc/kubernetes/pki/etcd/server.crt | base64 | tr -d '\n'`

ETCD_KEY=`cat /etc/kubernetes/pki/etcd/server.key | base64 | tr -d '\n'`

sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml

sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml

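POD_SUBNET="10.244.0.0/16"   # must match podSubnet in kubeadm-config.yaml; the substitution below sometimes fails, in which case edit the file by hand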

sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# value: "192.168.0.0/16"@value: '"${POD_SUBNET}"'@g' calico-etcd.yaml
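The Calico pool has to use the same CIDR as podSubnet in kubeadm-config.yaml. Rather than typing the value twice, it can be read from the kubeadm file. The following is only a sketch: the helper name is hypothetical, and it assumes podSubnet appears once, on a single line.

```shell
# pod_subnet_from_config FILE: print the podSubnet value of a kubeadm
# configuration file with the surrounding double quotes removed.
pod_subnet_from_config() {
    awk '$1 == "podSubnet:" { gsub(/"/, "", $2); print $2; exit }' "$1"
}

# Example:
#   POD_SUBNET=$(pod_subnet_from_config /opt/kubernetes/kubeadm-config.yaml)
```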

修改apiserver地址,就是value的值

env:

- name: KUBERNETES_SERVICE_HOST

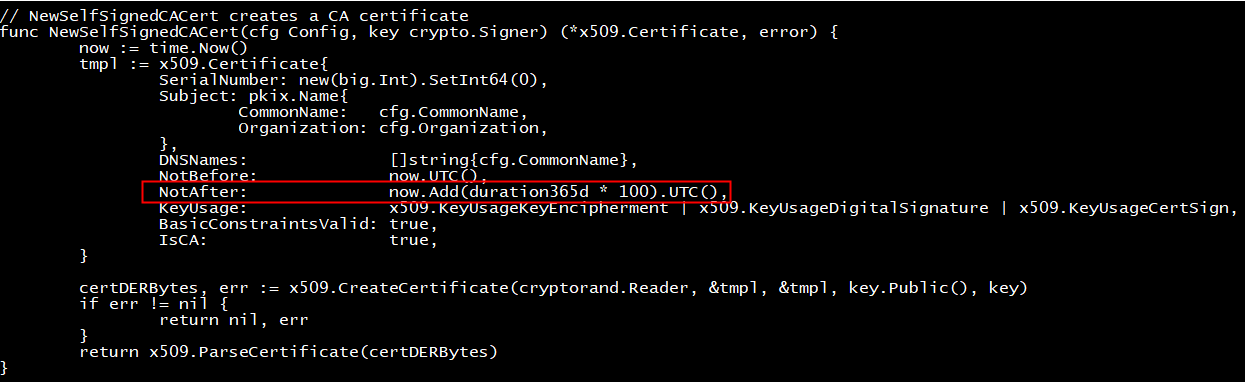

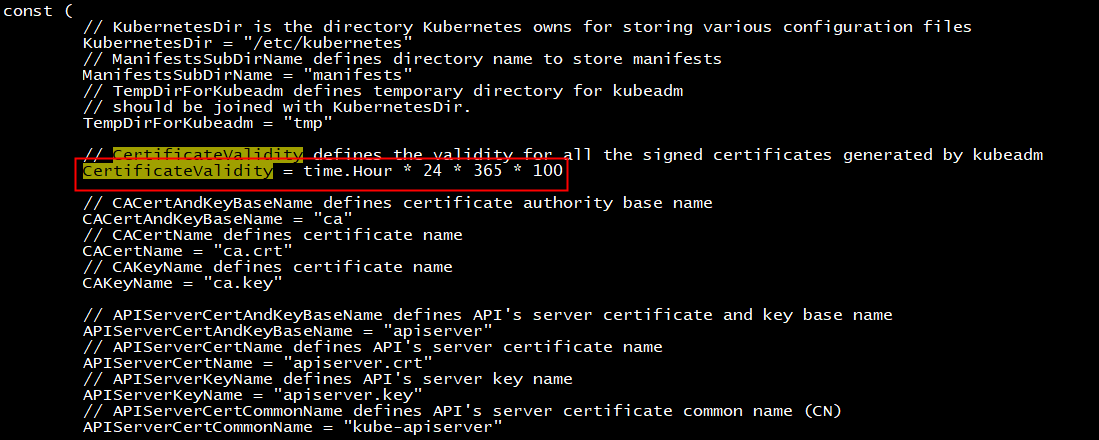

value: "192.168.2.230"修改证书时间

Here the validity is changed to 100 years.

cd /root/kubernetes-release-1.22

vim ./staging/src/k8s.io/client-go/util/cert/cert.go

vim ./cmd/kubeadm/app/constants/constants.go

Go back to /root/kubernetes and build:

make WHAT=cmd/kubeadm GOFLAGS=-v

7. Back up /usr/bin/kubeadm

cp /usr/bin/kubeadm /usr/bin/kubeadm_bak

8. Back up /etc/kubernetes/pki

cp -r /etc/kubernetes/pki /etc/kubernetes/pki_bak

9. When the build finishes, copy /root/kubernetes/_output/bin/kubeadm to /usr/bin/kubeadm

\cp /root/kubernetes/_output/bin/kubeadm /usr/bin/

10. Renew the certificates; kubeadm-config.yaml is the configuration file that was used to build the cluster

kubeadm alpha certs renew all --config=kubeadm-config.yaml

On newer releases: kubeadm certs renew all --config=kubeadm-config.yaml

11. Restart kube-apiserver, kube-controller-manager, kube-scheduler and etcd

docker ps |grep kube-apiserver|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

ba047a5ccb94

docker ps |grep kube-controller-manager|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

983e0a805a71

docker ps |grep kube-scheduler|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

ea5c4dea15ee

docker ps |grep etcd|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

12. Run on the other master nodes; the rebuilt kubeadm binary must be used there as well

kubeadm certs renew all

2. Restart kube-apiserver, kube-controller-manager, kube-scheduler and etcd

docker ps |grep kube-apiserver|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

ba047a5ccb94

docker ps |grep kube-controller-manager|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

983e0a805a71

docker ps |grep kube-scheduler|grep -v pause|awk '{print $1}'|xargs -i docker restart {}

ea5c4dea15ee

docker ps |grep etcd|grep -v pause|awk '{print $1}'|xargs -i docker restart {}
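The four restart pipelines differ only in the component name, so they can share one filter. The sketch below reads `docker ps` output from standard input, which also makes it easy to try against a saved listing. The function name is an assumption, and `xargs -r` in the example is the GNU option that skips the command when there is no input.

```shell
# component_container_ids NAME: read `docker ps` output on stdin and print
# the IDs of containers whose line mentions NAME, skipping pause containers.
component_container_ids() {
    grep -- "$1" | grep -v pause | awk '{ print $1 }'
}

# Example: restart all four control-plane components in one loop.
#   for c in kube-apiserver kube-controller-manager kube-scheduler etcd; do
#       docker ps | component_container_ids "$c" | xargs -r docker restart
#   done
```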

Check the certificates

kubeadm certs check-expiration

If the token has expired when a node tries to join, create a new one; --ttl=0 creates a token that never expires

kubeadm token create --print-join-command --ttl=0

Change the default NodePort range in k8s

1. Edit the manifest; with multiple masters, it has to be changed on every master

vim /etc/kubernetes/manifests/kube-apiserver.yaml and add:

- --service-node-port-range=1-65535   # add this below the --service-cluster-ip-range line

2. Restart kubelet

systemctl daemon-reload

systemctl restart kubelet
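Since the same edit is needed on every master, it can be scripted. The following is a rough sketch rather than a tested procedure: the helper name is hypothetical, and it assumes the manifest already contains a --service-cluster-ip-range line whose indentation the new flag should copy.

```shell
# add_node_port_range MANIFEST: print the manifest with the flag inserted
# right after the --service-cluster-ip-range line, reusing its indentation.
# The manifest is printed unchanged if the flag is already present.
add_node_port_range() {
    if grep -q -- '--service-node-port-range=' "$1"; then
        cat "$1"
        return 0
    fi
    awk '
        { print }
        /--service-cluster-ip-range=/ {
            match($0, /^[ \t]*/)
            printf "%s- --service-node-port-range=1-65535\n", substr($0, 1, RLENGTH)
        }
    ' "$1"
}

# Example (write the result outside the manifests directory, then move it in):
#   add_node_port_range /etc/kubernetes/manifests/kube-apiserver.yaml > /tmp/kube-apiserver.yaml
#   mv /tmp/kube-apiserver.yaml /etc/kubernetes/manifests/kube-apiserver.yaml
```

The function prints to stdout instead of editing in place, so the result can be reviewed before it replaces the live manifest.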


This is an original article licensed under CC BY-NC-ND 4.0. When reposting it in full, please credit 运维小白.
