Deploying haproxy-ingress as the Kubernetes gateway ingress

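What is haproxy-ingress

HAProxy Ingress is a community-maintained ingress controller for Kubernetes clusters. It integrates the high-performance HAProxy load balancer into Kubernetes and serves as the single entry point for traffic coming from outside the cluster.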

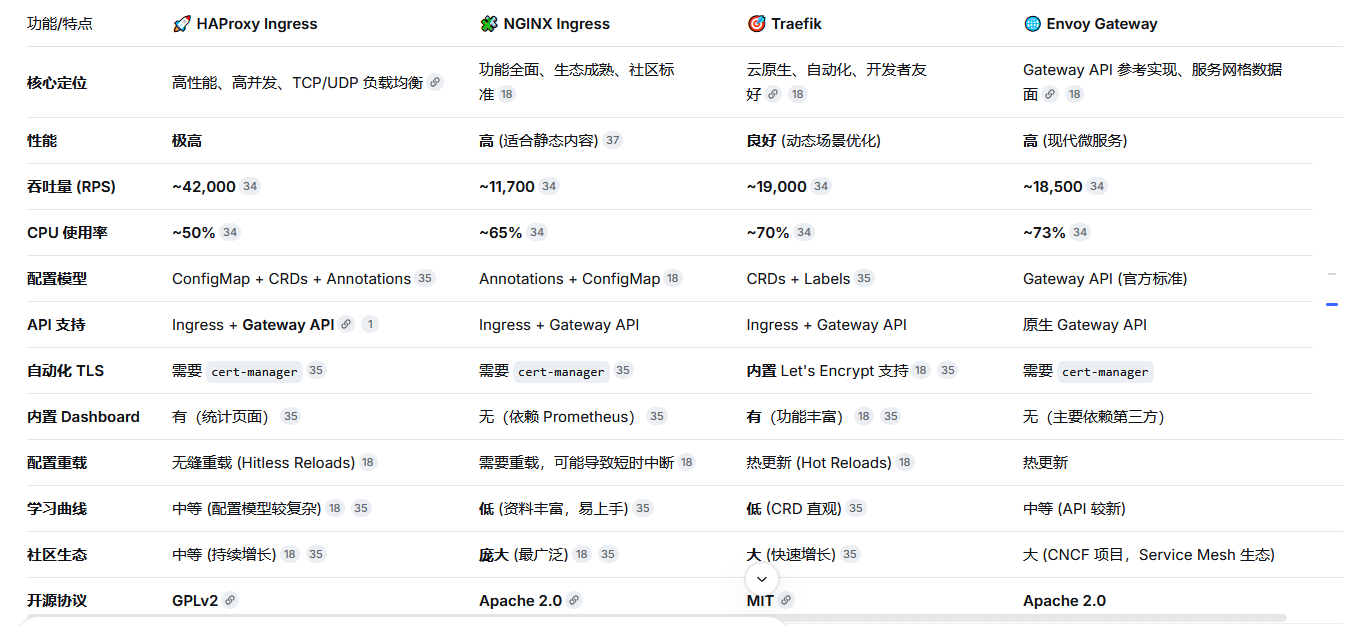

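Why choose HAProxy

The main strength of HAProxy Ingress is the HAProxy engine behind it, which is known for high performance and high reliability.

According to a vendor benchmark published by HAProxy Technologies, HAProxy-based ingress held a clear performance lead over other mainstream Ingress controllers such as NGINX, Envoy, and Traefik.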

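haproxy-ingress website

https://haproxy-ingress.github.io/

Label the nodes

The label must match the nodeSelector (run: haproxy-ingress) used by the Deployment below.

kubectl label node nodename run=haproxy-ingress

Deploy haproxy-ingress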

---

apiVersion: v1
kind: Namespace
metadata:
  name: ingress-controller
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: ingress-controller
  namespace: ingress-controller

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: ingress-controller
rules:
- apiGroups:
  - ""
  resources:
  - configmaps
  - endpoints
  - nodes
  - pods
  - secrets
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - services
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - extensions
  - networking.k8s.io
  resources:
  - ingressclasses
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - events
  verbs:
  - create
  - patch
- apiGroups:
  - extensions
  - networking.k8s.io
  resources:
  - ingresses/status
  verbs:
  - update
- apiGroups:
  - discovery.k8s.io
  resources:
  - endpointslices
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - gateway.networking.k8s.io
  resources:
  - gateways
  - gatewayclasses
  - httproutes
  - tcproutes
  - tlsroutes
  - udproutes
  - backendpolicies
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses/status
  verbs:
  - patch
  - update

---

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: ingress-controller
  namespace: ingress-controller
rules:
- apiGroups:
  - coordination.k8s.io
  resources:
  - leases
  verbs:
  - get
  - create
  - update
- apiGroups:
  - ""
  resources:
  - configmaps
  - pods
  - secrets
  - namespaces
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - configmaps
  verbs:
  - get
  - update
- apiGroups:
  - ""
  resources:
  - configmaps
  verbs:
  - create
- apiGroups:
  - ""
  resources:
  - endpoints
  verbs:
  - get
  - create
  - update
- apiGroups: ["apps"]
  resources: ["replicasets"]
  verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ingress-controller
subjects:
- kind: ServiceAccount
  name: ingress-controller
  namespace: ingress-controller
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: ingress-controller
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: ingress-controller
  namespace: ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: ingress-controller
subjects:
- kind: ServiceAccount
  name: ingress-controller
  namespace: ingress-controller
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: ingress-controller

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: haproxy-ingress
  namespace: ingress-controller
data:
  max-connections: "400000"
  forwardfor: "ignore"
  syslog-endpoint: "stdout"
  syslog-format: "raw"

---

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    run: haproxy-ingress
  name: haproxy-ingress
  namespace: ingress-controller
spec:
  replicas: 1
  selector:
    matchLabels:
      run: haproxy-ingress
  template:
    metadata:
      labels:
        run: haproxy-ingress
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: run
                operator: In
                values:
                - haproxy-ingress
            topologyKey: "kubernetes.io/hostname"
      nodeSelector:
        run: haproxy-ingress
      serviceAccountName: ingress-controller
      containers:
      - name: haproxy-ingress
        image: docker.io/jcmoraisjr/haproxy-ingress:v0.15.1
        imagePullPolicy: IfNotPresent
        args:
        - --configmap=$(POD_NAMESPACE)/haproxy-ingress
        - --sort-backends
        ports:
        - name: http
          containerPort: 80
          hostPort: 80
        - name: https
          containerPort: 443
          hostPort: 443
        - name: stat
          containerPort: 1936
        livenessProbe:
          httpGet:
            path: /healthz
            port: 10253
        resources:
          limits:
            cpu: 6000m
            memory: 14336Mi
          requests:
            cpu: 100m
            memory: 100Mi
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace

---

apiVersion: v1
kind: Service
metadata:
  name: haproxy-ingress
  namespace: ingress-controller
spec:
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: 80
  - name: https
    port: 443
    protocol: TCP
    targetPort: 443
  - name: stat
    port: 1936
    protocol: TCP
    targetPort: 1936
  selector:
    run: haproxy-ingress
  type: ClusterIP
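
Once the manifest above is saved to a file, applying it and checking the result could look roughly like the sketch below. The file name haproxy-ingress.yaml and the function name are assumptions made for illustration; the namespace, Deployment name, and pod label come from the manifest itself. It only uses standard kubectl subcommands (apply, rollout status, get).

```shell
#!/usr/bin/env bash
# Sketch only: apply the controller manifest and wait until it is ready.
# "haproxy-ingress.yaml" is a hypothetical file name for the manifest above.

deploy_haproxy_ingress() {
  local manifest="${1:-haproxy-ingress.yaml}"
  local ns="ingress-controller"

  kubectl apply -f "$manifest" || return 1
  # Wait until the Deployment reports its replicas as available, or time out.
  kubectl -n "$ns" rollout status deployment/haproxy-ingress --timeout=120s || return 1
  # List the controller pods with their nodes; they should sit on labeled nodes.
  kubectl -n "$ns" get pods -l run=haproxy-ingress -o wide
}

# Usage: deploy_haproxy_ingress ./haproxy-ingress.yaml
```

If the pod stays Pending, the usual suspects in this setup are a missing node label (the nodeSelector cannot be satisfied) or ports 80/443 already being taken on the node, since the Deployment uses hostPort.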

Deploy an exporter to monitor haproxy-ingress

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    run: haproxy-exporter
  name: haproxy-exporter
  namespace: monitor
spec:
  replicas: 1
  selector:
    matchLabels:
      run: haproxy-exporter
  template:
    metadata:
      labels:
        run: haproxy-exporter
    spec:
      containers:
      - name: haproxy-exporter
        image: quay.io/prometheus/haproxy-exporter:v0.11.0
        imagePullPolicy: IfNotPresent
        args:
        - '--haproxy.scrape-uri=http://haproxy-ingress.ingress-controller.svc.cluster.local:1936/haproxy?stats;csv'
        - --web.listen-address=:9101
        ports:
        - name: metrics
          containerPort: 9101
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /
            port: metrics
        readinessProbe:
          httpGet:
            path: /
            port: metrics
        resources:
          limits:
            cpu: 2000m
            memory: 2048Mi
          requests:
            cpu: 100m
            memory: 100Mi

---

apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/scrape: "true"
    prometheus.io/port: "9101"
  labels:
    app.kubernetes.io/component: metrics
  name: haproxy-exporter
  namespace: monitor
spec:
  ports:
  - name: metrics
    port: 9101
    targetPort: 9101
  selector:
    run: haproxy-exporter
  type: ClusterIP

Deploy the WAF (optional, depending on business needs)

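To enable the WAF, add the setting modsecurity-endpoints: 172.20.216.246:12345 to the ConfigMap in the haproxy-ingress deployment YAML, where 172.20.216.246 is the IP of the WAF Service and 12345 is its port. The configuration is shown below. When creating an Ingress, also add the annotation haproxy-ingress.github.io/waf: "modsecurity".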

apiVersion: v1
data:
  modsecurity-endpoints: 172.20.216.246:12345
  ...
kind: ConfigMap
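
The endpoint IP above is specific to one cluster. As a rough sketch, it could instead be looked up from the WAF Service and merged into the ConfigMap. This assumes the WAF Service is the coraza-spoa Service in the ingress-controller namespace (the one deployed in this section) and that it already exists; the two function names are made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: read the WAF Service address and write it into the
# haproxy-ingress ConfigMap. Function names are hypothetical.

# Print "<ClusterIP>:<port>" for the first port of a Service.
waf_endpoint() {
  local ns="$1" svc="$2"
  kubectl -n "$ns" get svc "$svc" \
    -o jsonpath='{.spec.clusterIP}:{.spec.ports[0].port}'
}

# Merge modsecurity-endpoints into the existing ConfigMap data.
set_waf_endpoint() {
  local endpoint
  endpoint="$(waf_endpoint ingress-controller coraza-spoa)" || return 1
  [ -n "$endpoint" ] || return 1
  kubectl -n ingress-controller patch configmap haproxy-ingress --type merge \
    -p "{\"data\":{\"modsecurity-endpoints\":\"${endpoint}\"}}"
}

# Usage: set_waf_endpoint
```

A merge patch only touches the modsecurity-endpoints key, so the other ConfigMap settings stay as they are.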

$ kubectl create -f - <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    haproxy-ingress.github.io/waf: "modsecurity"
  name: echo
spec:
  rules:
  - host: echo.domain
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: echo
            port:
              number: 8080
EOF

---

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    run: coraza-spoa
  name: coraza-spoa
  namespace: ingress-controller
spec:
  replicas: 1
  selector:
    matchLabels:
      run: coraza-spoa
  template:
    metadata:
      labels:
        run: coraza-spoa
    spec:
      containers:
      - name: coraza-spoa
        image: jcmoraisjr/coraza-spoa:experimental
        ports:
        - containerPort: 12345
          name: spop
          protocol: TCP
        resources:
          limits:
            cpu: 2000m
            memory: 4096Mi
          requests:
            cpu: 200m
            memory: 150Mi
        livenessProbe:
          failureThreshold: 3
          initialDelaySeconds: 30
          periodSeconds: 5
          successThreshold: 1
          tcpSocket:
            port: 12345
          timeoutSeconds: 4
        volumeMounts:
        - name: coraza-config
          mountPath: /config.yaml
          subPath: config.yaml
          readOnly: true
      volumes:
      - name: coraza-config
        configMap:
          name: coraza-config

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: coraza-config
  namespace: ingress-controller
data:
  config.yaml: |
    bind: :12345
    default_application: default_app
    applications:
      default_app:
        include:
          - /etc/coraza-spoa/coraza.conf
          - /etc/coraza-spoa/crs-setup.conf
          - /etc/coraza-spoa/rules/*.conf
        transaction_ttl: 60000
        transaction_active_limit: 100000
        log_level: info
        log_file: /dev/stdout

---

apiVersion: v1
kind: Service
metadata:
  name: coraza-spoa
  namespace: ingress-controller
  labels:
    app: coraza
spec:
  ports:
  - name: coraza
    port: 12345
    protocol: TCP
    targetPort: 12345
  selector:
    run: coraza-spoa
  type: ClusterIP

Blue-green / canary releases

When creating the Deployments, add the blue-green/canary labels run: bluegreen and group: <Service name> to the pods, as shown below.

Deploy game-gate0

apiVersion: apps/v1
kind: Deployment
metadata:
  name: game-gate0
spec:
  replicas: 1
  selector:
    matchLabels:
      name: game-gate0
      run: bluegreen
      group: game-gate0
  template:
    metadata:
      labels:
        name: game-gate0
        run: bluegreen
        group: game-gate0
    spec:
      containers:

Create the game-gate0 Service

apiVersion: v1
kind: Service
metadata:
  name: game-gate0
  labels:
    app: game-gate0
spec:
  ports:
  - name: game-gate0
    port: 9002
    protocol: TCP
    targetPort: 9002
  - name: gatesvrd10
    port: 8888
    protocol: TCP
    targetPort: 8888
  selector:
    name: game-gate0
  type: ClusterIP

Deploy game-gate1

apiVersion: apps/v1
kind: Deployment
metadata:
  name: game-gate1
spec:
  replicas: 1
  selector:
    matchLabels:
      name: game-gate1
      run: bluegreen
      group: game-gate1
  template:
    metadata:
      labels:
        name: game-gate1
        run: bluegreen
        group: game-gate1
    spec:
      containers:

Create the game-gate1 Service

apiVersion: v1
kind: Service
metadata:
  name: game-gate1
  labels:
    app: game-gate1
spec:
  ports:
  - name: game-gate1
    port: 9002
    protocol: TCP
    targetPort: 9002
  - name: gatesvrd10
    port: 8888
    protocol: TCP
    targetPort: 8888
  selector:
    name: game-gate1
  type: ClusterIP

Create the Service used by the Ingress

apiVersion: v1
kind: Service
metadata:
  name: game-gate
  labels:
    app: game-gate
spec:
  ports:
  - name: game-gate
    port: 9002
    protocol: TCP
    targetPort: 9002
  - name: game-gate-1
    port: 8888
    protocol: TCP
    targetPort: 8888
  selector:
    run: bluegreen
  type: ClusterIP

Create the Ingress

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: game-gate
  annotations:
    kubernetes.io/ingress.class: haproxy
    haproxy-ingress.github.io/balance-algorithm: leastconn
    haproxy-ingress.github.io/maxconn-server: "300000"
    haproxy-ingress.github.io/blue-green-deploy: group=game-gate0=1,group=game-gate1=0
    haproxy-ingress.github.io/blue-green-mode: deploy
spec:
  tls:
  - hosts:
    - gamews.xxxxx.cc
    secretName: xxxxx.cc
  rules:
  - host: gamews.xxxxx.cc
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: game-gate
            port:
              number: 8888

Blue-green / canary automatic switching script

#!/bin/bash
# Flip traffic between game-gate0 and game-gate1 by swapping their
# blue-green weights in the Ingress manifest and re-applying it.

gate_yaml=/root/haproxy-ingress/game-gate.yaml

# The annotation value looks like: group=game-gate0=1,group=game-gate1=0
deploy_value=$(grep blue-green-deploy "$gate_yaml" | awk '{print $2}')
gate0_weight=$(echo "$deploy_value" | awk -F ',' '{print $1}' | awk -F '=' '{print $3}')
gate1_weight=$(echo "$deploy_value" | awk -F ',' '{print $2}' | awk -F '=' '{print $3}')

# Pod names are left unquoted below on purpose: there may be several.
gate0_pod=$(kubectl get po | grep gate0 | awk '{print $1}')
gate1_pod=$(kubectl get po | grep gate1 | awk '{print $1}')

canary() {
  if [ "$gate0_weight" == "1" ]; then
    echo "Canary release: switching traffic from server:game-gate0 to server:game-gate1"
    # Recreate the idle pods first, then give them 40 seconds to start.
    kubectl delete po $gate1_pod && sleep 40
    sed -i 's#group=game-gate0=1#group=game-gate0=0#g' "$gate_yaml"
    sed -i 's#group=game-gate1=0#group=game-gate1=1#g' "$gate_yaml"
    kubectl apply -f "$gate_yaml"
  elif [ "$gate0_weight" == "0" ]; then
    echo "Canary release: switching traffic from server:game-gate1 to server:game-gate0"
    kubectl delete po $gate0_pod && sleep 40
    sed -i 's#group=game-gate0=0#group=game-gate0=1#g' "$gate_yaml"
    sed -i 's#group=game-gate1=1#group=game-gate1=0#g' "$gate_yaml"
    kubectl apply -f "$gate_yaml"
  else
    echo "Canary release failed"
  fi
}

canary
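
A variation on the script above reads the weights from the live Ingress and swaps them with kubectl annotate rather than editing the YAML file. This is only a sketch under a few assumptions: the Ingress is named game-gate in the current namespace, the annotation always holds exactly two group=name=weight entries, and the function names are invented for illustration. It does not restart the idle pods, and it leaves the file on disk out of sync with the cluster.

```shell
#!/usr/bin/env bash
# Sketch only: swap the two blue-green weights on the live Ingress.
# Function names are hypothetical.

# "group=game-gate0=1,group=game-gate1=0" -> "group=game-gate0=0,group=game-gate1=1"
swap_weights() {
  local value="$1" first second w0 w1
  first="${value%%,*}"     # entry before the comma
  second="${value#*,}"     # entry after the comma
  w0="${first##*=}"        # weight of the first entry
  w1="${second##*=}"       # weight of the second entry
  printf '%s=%s,%s=%s\n' "${first%=*}" "$w1" "${second%=*}" "$w0"
}

switch_traffic() {
  local ingress="game-gate"
  local key="haproxy-ingress.github.io/blue-green-deploy"
  local current next
  # Dots inside an annotation key have to be escaped in a jsonpath expression.
  current="$(kubectl get ingress "$ingress" \
    -o jsonpath='{.metadata.annotations.haproxy-ingress\.github\.io/blue-green-deploy}')" || return 1
  [ -n "$current" ] || { echo "annotation not found on $ingress" >&2; return 1; }
  next="$(swap_weights "$current")"
  kubectl annotate ingress "$ingress" "${key}=${next}" --overwrite
}

# Usage: switch_traffic
```

Because swap_weights simply exchanges the two numbers, the same function also covers a partial canary split such as 9 and 1, not just the 1 and 0 case handled by the original script.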


This is an original article, licensed under CC BY-NC-ND 4.0. When reposting it in full, credit 运维小白.
